Path tracing with babylonjs // Part 1 : Solids

Path tracing is an interesting technique that makes some things easier to render than with a traditional rasterizer (say, triangles):

Volumetrics, portals, parametric surfaces, subsurface scattering, ocean simulation, …

This comes at the price of consuming more GPU resources. But as GPU power increases more rapidly than CPU power, this can be a win in the future.

Today, I am starting a series of 3 blog posts.

This first one is about setting everything up and the steps to get a perfectly lit sphere.

The second one will talk about volumetric effects.

And the last one will be about height rendering and more complex effects.

This series assumes you have almost no knowledge of the topic, so it will take you by the hand on a fun journey through procedural rendering!

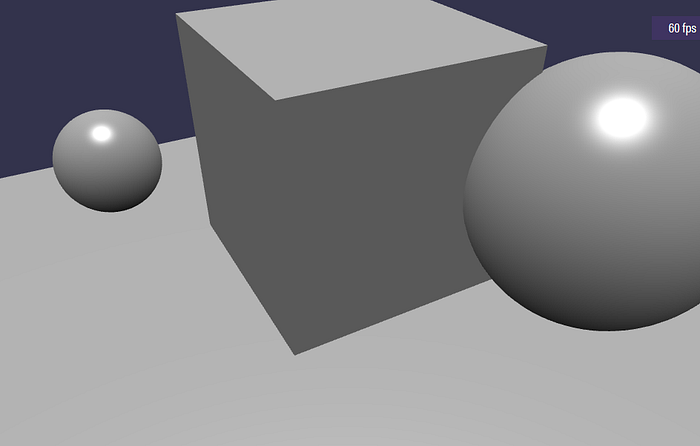

Let’s start with a simple playground with 2 spheres and a box.

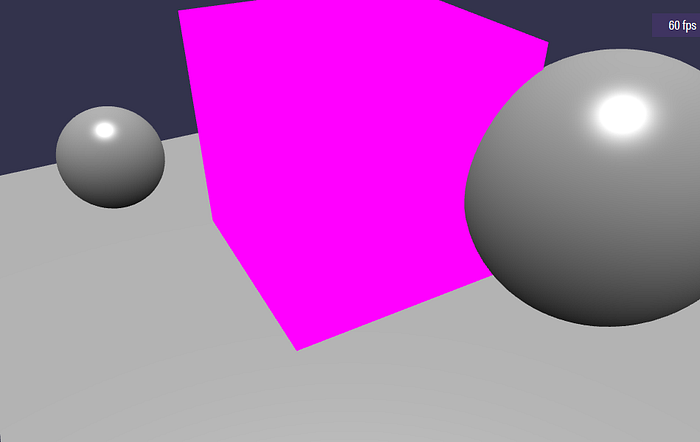

All the path tracing will happen in a pixel shader. So let’s add one that sets a nice purple color.

The core principle of path tracing is to move a position along a line (from the eye to infinity) and, when that moving position hits a surface, draw it.

The start position is easy to find: it is the eye position. The end position might be tricky to find. But we can get a position for each pixel on the surface of the cube.

Then, compute the ray direction as a unit vector: the cube surface position minus the eye position, normalized. Let’s do that and render each pixel as the value of that vector.
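As a rough illustration, here is the same computation written on the CPU side in TypeScript rather than in the shader. The `Vec3` type and the helper names (`subtract`, `normalize`, `rayDirection`) are hypothetical and only chosen for this sketch; in a shader, the built-in vector operations would do the same job.

```typescript
type Vec3 = [number, number, number];

// Component-wise difference a - b.
function subtract(a: Vec3, b: Vec3): Vec3 {
  return [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
}

// Scale a vector to length 1 (assumes a non-zero input).
function normalize(v: Vec3): Vec3 {
  const len = Math.hypot(v[0], v[1], v[2]);
  return [v[0] / len, v[1] / len, v[2] / len];
}

// Unit direction going from the eye toward a point on the cube surface.
function rayDirection(eye: Vec3, surface: Vec3): Vec3 {
  return normalize(subtract(surface, eye));
}
```

Because the result is normalized, only the direction matters: two surface points on the same line from the eye give the same vector.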

When you rotate the view, you’ll see the colors change. That’s expected as the eye position changes.

Note that the world position is computed in the vertex shader and forwarded to the pixel shader. That position is interpolated between the vertices for each pixel that’s rendered.

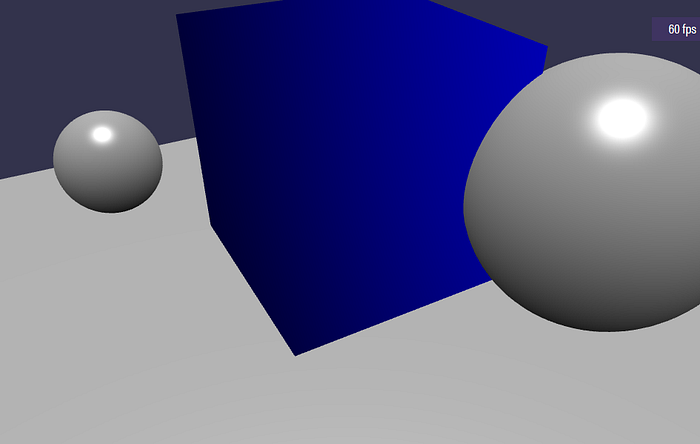

The next step is to have something more solid to render. Let’s say a sphere (it’s one of the simplest shapes to render).

We have the world position at the surface of the cube and an imaginary sphere at position (0,1,0) with a radius of 1.1.

To render that, simply draw a white pixel if the surface world position of the pixel is inside the sphere and nothing if it’s outside.

To determine if a world position is inside a sphere, compute the distance between the sphere center and that position. If that distance is smaller than the sphere radius, then we are inside.

That computation is done in the ‘sphereDistance’ function. The operation is arranged so that it returns a value of 0 or below when inside, and a value greater than 0 when outside.
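A minimal TypeScript sketch of that test, using the sphere described above. The name `sphereDistance` comes from the article, but its exact signature is not shown there, so the one used here is an assumption, as are `Vec3`, `sphereCenter`, `sphereRadius` and `pixelColor`.

```typescript
type Vec3 = [number, number, number];

// Sphere from the article: centered at (0, 1, 0) with a radius of 1.1.
const sphereCenter: Vec3 = [0, 1, 0];
const sphereRadius = 1.1;

// Signed distance to the sphere: 0 or below when inside, above 0 when outside.
function sphereDistance(p: Vec3): number {
  const distanceToCenter = Math.hypot(
    p[0] - sphereCenter[0],
    p[1] - sphereCenter[1],
    p[2] - sphereCenter[2],
  );
  return distanceToCenter - sphereRadius;
}

// White when the surface position is inside the sphere, null when nothing is drawn.
function pixelColor(surfacePosition: Vec3): Vec3 | null {
  return sphereDistance(surfacePosition) <= 0 ? [1, 1, 1] : null;
}
```

Subtracting the radius from the distance to the center is what moves the inside/outside threshold to 0.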

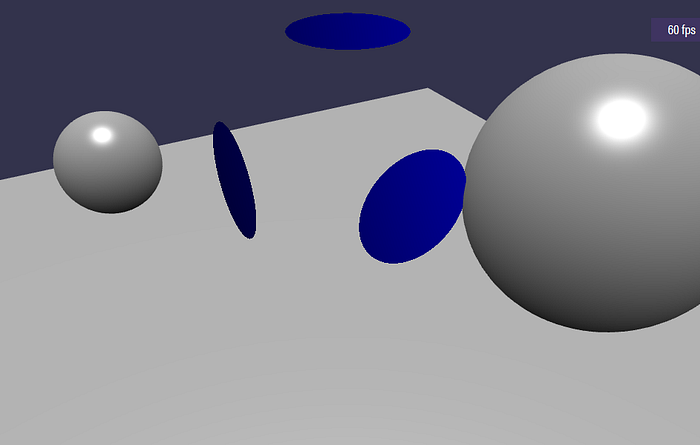

This looks like a special dice with a disk on each side.

It’s not a sphere yet. It’s the intersection of each face with the sphere. If you cut a really thin slice of a sphere, you’ll end up with a disk.

To make it look like a sphere, we need to use the ray direction that we talked about earlier and run a small loop to check deeper positions and not only the surface.

That’s it! We have a strangely shaded sphere!

At each step, we move along the ray and, if the current position is inside the sphere, we draw a pixel and exit. If we never reach the inside of the sphere, we discard the pixel and render nothing.
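The loop can be sketched like this in TypeScript. The step size (0.05) and the maximum number of steps (100) are values picked for the sketch, not the ones from the original shader, and `march` is a hypothetical name. Returning `null` plays the role of discarding the pixel.

```typescript
type Vec3 = [number, number, number];

// Signed distance to the article's sphere: center (0, 1, 0), radius 1.1.
function sphereDistance(p: Vec3): number {
  return Math.hypot(p[0], p[1] - 1, p[2]) - 1.1;
}

// Walk along the ray in fixed steps, starting at the cube surface.
// Returns the first sampled position inside the sphere, or null for a miss.
function march(start: Vec3, direction: Vec3, stepSize = 0.05, maxSteps = 100): Vec3 | null {
  let position: Vec3 = [start[0], start[1], start[2]];
  for (let i = 0; i < maxSteps; i++) {
    if (sphereDistance(position) <= 0) {
      return position; // inside the sphere: draw this pixel and exit
    }
    position = [
      position[0] + direction[0] * stepSize,
      position[1] + direction[1] * stepSize,
      position[2] + direction[2] * stepSize,
    ];
  }
  return null; // never reached the sphere: discard the pixel
}
```

A smaller step gives a more precise hit position but needs more iterations to cover the same distance.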

Now, let’s improve the shading. We will need the normal for that. How can we get the normal when we only have the position?

Simple: for a sphere, the normal is the normalized vector between the sphere center and the position. From any point on the surface of the sphere, imagine the line that goes inward to the center; the normal lies along that line, pointing outward.
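In TypeScript, a sketch of that normal could look like the following. It assumes the usual convention of a normal pointing away from the center; `sphereNormal` and `sphereCenter` are names made up for the example.

```typescript
type Vec3 = [number, number, number];

// Sphere center from the article.
const sphereCenter: Vec3 = [0, 1, 0];

// Normal of the sphere at a position: the unit vector from the center to that position.
// Assumes the position is not exactly at the center.
function sphereNormal(position: Vec3): Vec3 {
  const x = position[0] - sphereCenter[0];
  const y = position[1] - sphereCenter[1];
  const z = position[2] - sphereCenter[2];
  const len = Math.hypot(x, y, z);
  return [x / len, y / len, z / len];
}
```

For the top of the sphere, at (0, 2.1, 0), this gives a normal pointing straight up.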

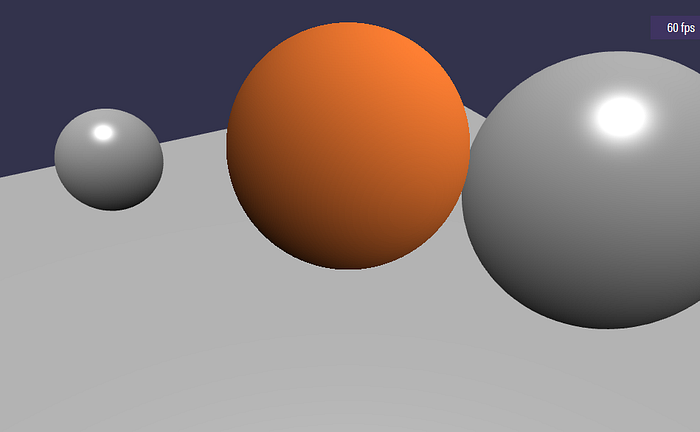

With the normal, a simple dot product with an arbitrary light direction will do the trick.

The diffuse lighting computation is only meant to give a nice rendering. It’s not linked to the babylonjs light information.
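Here is a small TypeScript sketch of that dot product. It assumes both vectors are normalized and that the light direction points from the surface toward the light; clamping negative values to 0 is a common choice that the original shader may or may not make. The names `dot` and `diffuse` are hypothetical.

```typescript
type Vec3 = [number, number, number];

// Dot product of two vectors.
function dot(a: Vec3, b: Vec3): number {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Diffuse term: 1 when the surface faces the light, 0 when it faces away.
// Both arguments are assumed to be unit vectors.
function diffuse(normal: Vec3, directionToLight: Vec3): number {
  return Math.max(dot(normal, directionToLight), 0);
}
```

The result can be used directly as a gray level, or multiplied with a surface color.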

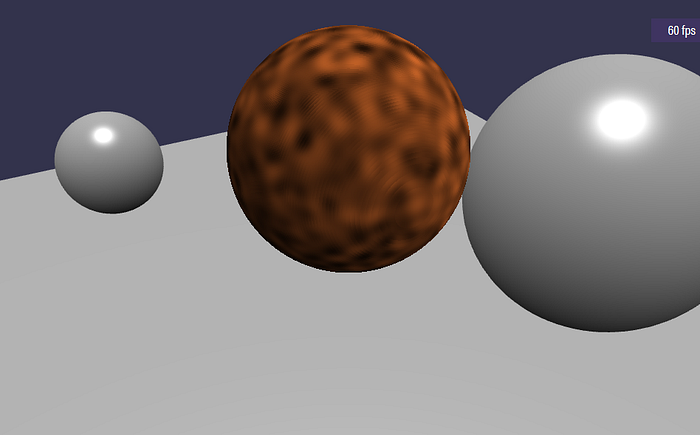

Now, let’s add a procedural texture that will make it look like raytracing experiments from the 80s.

A very important function in rendering is noise. A noise function returns a pseudo-random value for a given input. Here, we use simplex noise.

The noise implementation comes from this shader: https://www.shadertoy.com/view/XsX3zB and you can get more information on the math behind it here: https://en.wikipedia.org/wiki/Simplex_noise

The seed for the noise is the current world position. The value is rescaled to [0..1] and used as a diffuse texture.
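The rescaling step can be sketched as follows in TypeScript. Two assumptions are made here: the noise returns values in [-1..1], and the texture is applied by multiplying it with the lighting term. `placeholderNoise` is only a stand-in that returns a repeatable value for a position; it is not simplex noise.

```typescript
type Vec3 = [number, number, number];

// Stand-in for the simplex noise used in the article. NOT simplex noise:
// it just returns a repeatable value in [-1, 1] for a given position.
function placeholderNoise(p: Vec3): number {
  return Math.sin(p[0] * 12.9898 + p[1] * 78.233 + p[2] * 37.719);
}

// Rescale a value from [-1, 1] to [0, 1].
function toUnitRange(value: number): number {
  return value * 0.5 + 0.5;
}

// Use the rescaled noise at the world position as a diffuse texture.
function texturedDiffuse(worldPosition: Vec3, lighting: number): number {
  return toUnitRange(placeholderNoise(worldPosition)) * lighting;
}
```

Because the input is the world position, the pattern stays attached to the sphere when the camera moves.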

There is one big annoying thing here! Did you notice that the rendering looks like it is composed of slices? Actually, it is! The current position advances one small step at a time.

So it acts as if it were slicing the sphere into a number of disks. Not great!

We can do better. When we compute the pixel for a position inside the sphere, we know that the previous position was not inside the sphere.

So the real intersection lies on the line between the previous position and the current position. Also, the previous distance was positive (outside) and the current distance is zero or negative (inside).

Then previousDistance / (previousDistance - currentDistance) is the ratio along the segment from the previous position to the current position at which the sphere surface lies. It’s an approximation, but using that interpolated position gives a much smoother result.
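A TypeScript sketch of that interpolation, assuming the distance varies linearly between the two samples. The names `refineHit` and `ratio` are hypothetical, and the function expects a positive previous distance and a current distance of 0 or below.

```typescript
type Vec3 = [number, number, number];

// Estimate where the surface lies between the last sample outside the sphere
// (previousDistance > 0) and the first sample inside it (currentDistance <= 0).
function refineHit(
  previousPosition: Vec3,
  currentPosition: Vec3,
  previousDistance: number,
  currentDistance: number,
): Vec3 {
  // 0 means "at the previous position", 1 means "at the current position".
  const ratio = previousDistance / (previousDistance - currentDistance);
  return [
    previousPosition[0] + (currentPosition[0] - previousPosition[0]) * ratio,
    previousPosition[1] + (currentPosition[1] - previousPosition[1]) * ratio,
    previousPosition[2] + (currentPosition[2] - previousPosition[2]) * ratio,
  ];
}
```

For example, with a previous distance of 0.3 and a current distance of -0.1, the ratio is 0.3 / 0.4 = 0.75, so the estimated hit sits three quarters of the way from the previous position to the current one.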

That’s it for Part 1! You now know enough to add simple path tracing to your scenes. Of course, you’ll need more complex shapes than a simple sphere, but just by adding small steps to the path tracing, you can improve the lighting and the texture, and even animate everything with time. But that will be for parts 2 and 3.

Appendix: more distance functions are described here : https://www.iquilezles.org/www/articles/distfunctions/distfunctions.htm