Ray Marching in the Babylon.js Node Material Editor

We made a number of updates to Babylon.js to support ray marching in the Node Material Editor (NME) nearly a year ago, but we never publicly explained what it is or how to take advantage of it, so let’s take an in-depth look at this feature!

What is ray marching?

Ray marching is a rendering technique used in computer graphics, with certain similarities to ray tracing. This is a method of rendering 3D scenes, often with complex, volumetric objects, which involves simulating the trajectory of rays emanating from the camera through a scene to determine how they interact with objects and surfaces. The main idea behind ray marching is to move along a ray from the camera’s perspective, step by step, to determine the intersection points with objects in the scene.

Here’s how ray marching typically works:

- Camera and Rays: You have a virtual camera that defines the viewpoint. Rays are cast from the camera into the scene.

- Iteration: You start at the camera’s position and move along the ray step by step in small increments. At each step, you calculate the distance to the nearest object in the scene.

- Distance Estimation: To find this distance, you often use a mathematical function or algorithm that estimates the distance to the nearest object. This function is usually defined by the geometry of the objects in the scene. Each object can be represented by a signed distance function (SDF). The sign of the return value indicates whether the point is inside or outside the object (by the usual convention, negative inside, zero on the surface, and positive outside). Note that in addition to mathematical functions, you can also use a 3D texture that stores pre-calculated distances to the volume you want to render, for maximum flexibility.

- Intersection: When the estimated distance is sufficiently small (i.e., the ray is very close to or intersects an object’s surface), you consider that point as an intersection with the object. You can then calculate lighting, shading, and other visual properties at that point.

- Color and Rendering: You accumulate the color and properties of the objects along the ray as you move through the scene. This allows you to render complex scenes with various objects and lighting conditions.
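The steps above can be sketched in a few lines of TypeScript. This is only a CPU-side illustration of the idea (often called sphere tracing), not code from Babylon.js: the scene, the helper names (`sdSphere`, `sceneSdf`, `rayMarch`) and the step limits are all assumptions chosen for the example.

```typescript
type Vec3 = [number, number, number];

const vadd = (a: Vec3, b: Vec3): Vec3 => [a[0] + b[0], a[1] + b[1], a[2] + b[2]];
const vscale = (a: Vec3, s: number): Vec3 => [a[0] * s, a[1] * s, a[2] * s];
const vlen = (a: Vec3): number => Math.sqrt(a[0] * a[0] + a[1] * a[1] + a[2] * a[2]);

// Signed distance to a sphere: negative inside, zero on the surface, positive outside.
function sdSphere(p: Vec3, center: Vec3, radius: number): number {
    return vlen([p[0] - center[0], p[1] - center[1], p[2] - center[2]]) - radius;
}

// Hypothetical scene: a single unit sphere centered 5 units in front of the camera.
function sceneSdf(p: Vec3): number {
    return sdSphere(p, [0, 0, 5], 1);
}

// March along the ray, advancing by the estimated distance at each step.
// Returns the distance travelled when a surface is reached, or -1 on a miss.
function rayMarch(start: Vec3, dir: Vec3, maxSteps = 100, maxDist = 100, epsilon = 1e-4): number {
    let t = 0;
    for (let i = 0; i < maxSteps; i++) {
        const d = sceneSdf(vadd(start, vscale(dir, t)));
        if (d < epsilon) {
            return t; // close enough to the surface: treat it as an intersection
        }
        t += d; // safe step: nothing in the scene is closer than d
        if (t > maxDist) {
            break;
        }
    }
    return -1;
}

const hitDistance = rayMarch([0, 0, 0], [0, 0, 1]); // ray aimed at the sphere
const missDistance = rayMarch([0, 0, 0], [0, 1, 0]); // ray aimed away from it
```

Stepping by the SDF value rather than by a fixed increment is what keeps the loop short: the ray can never overshoot a surface, because no object is closer than the distance just returned.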

Ray marching is commonly used for rendering fractals, volumetric effects, and scenes with complex, procedural objects. It’s flexible and can handle a wide range of situations, but it can be computationally intensive, especially for complex scenes or objects with intricate geometry. It’s also harder to create models by manipulating mathematical functions, as artists are more familiar with traditional DCC tools that generate triangle-based models.

Shadertoy is probably the best place to see ray marching in action, as most of the shaders there use ray marching! Inigo Quilez (who is one of the creators of Shadertoy) has many resources on SDFs and ray marching, which you can access from this page. You can also browse all his works from this page (the snail below is just one of his incredible creations!).

Ray marching in Babylon.js

You’ve been able to use ray marching in Babylon.js for a long time (in fact, ever since Babylon.js was created!), because all you have to do is place your code in a fragment shader to make it work! Over the years, a number of our forum users have ported Shadertoy examples to Babylon.js, or created their own examples (search for “shadertoy” or “ray marching” in the forums).

To illustrate this, here’s the Snail shader above running in Babylon.js (beware, it may be slow on your computer as it puts a strain on the GPU! But it’s a snail after all…): https://playground.babylonjs.com/#8Z0MKW#36

This is a copy/paste of the original shader code. The only thing to do is to pass the (uniform) variables that the code expects (iResolution, iTime, iFrame and the three textures).

As you can see, you can get some absolutely stunning images! However, there’s a big problem: this scene is generated by code alone (the shader is over 800 lines long!) and can’t be integrated with other regular scene models in Babylon.js, since everything is generated in the fragment shader. For better integration into an existing scene, you’d need the depth buffer to be updated with the correct values, as well as the shadow map(s) if you want your ray-marched objects to generate shadows on existing objects. This is where ray marching support for node materials can help.

Supporting ray marching in node materials

The most important change we had to make to support ray marching in node materials was to allow some of the existing blocks to generate all their shader code in the fragment shader only. This is because everything happens at pixel level with the ray marching algorithm, so no code must be generated in the vertex shader. The blocks we’ve updated are as follows:

- Lights: the block used to create node materials that behave like the standard material

- PBRMetallicRoughnessBlock: the block used to create PBR materials

- ReflectionBlock: the block used by the reflection input of the PBRMetallicRoughnessBlock.

In all three cases, we’ve added a “Generate only fragment code” switch on the block, which you need to activate if you want to use these blocks as part of a ray marching material:

This switch is not activated by default, firstly to preserve backward compatibility, and secondly because it’s generally more efficient to run code in the vertex shader rather than the fragment shader, where possible.

For integration into an existing scene, we also need to generate a fragment depth based on the position generated by the ray marching algorithm. We’ve added a FragDepthBlock that you can feed either with the world position and viewProjection matrix, or directly with the depth at that pixel:

That’s everything you need to create ray marching materials in the NME!
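To make the depth step more concrete, here is a minimal sketch of how a fragment depth can be derived from a world position and a viewProjection matrix. It is an illustration under stated assumptions, not the code the FragDepth block generates: the matrices are column-major and act on column vectors, the projection is an OpenGL-style one with NDC z in [-1, 1], the camera sits at the origin, and the helper names (`transformVec4`, `perspective`, `fragDepth`) are made up for the example.

```typescript
type Vec4 = [number, number, number, number];
// Column-major 4x4 matrix acting on column vectors: element (row, col) is m[col * 4 + row].
type Mat4 = number[];

function transformVec4(m: Mat4, v: Vec4): Vec4 {
    const out: Vec4 = [0, 0, 0, 0];
    for (let row = 0; row < 4; row++) {
        out[row] = m[row] * v[0] + m[4 + row] * v[1] + m[8 + row] * v[2] + m[12 + row] * v[3];
    }
    return out;
}

// Assumed OpenGL-style perspective projection: camera looks down -Z, NDC z in [-1, 1].
function perspective(fovY: number, aspect: number, near: number, far: number): Mat4 {
    const f = 1 / Math.tan(fovY / 2);
    return [
        f / aspect, 0, 0, 0,
        0, f, 0, 0,
        0, 0, (far + near) / (near - far), -1,
        0, 0, (2 * far * near) / (near - far), 0,
    ];
}

// Project the world position, apply the perspective divide, then remap NDC z to [0, 1].
function fragDepth(worldPos: Vec4, viewProjection: Mat4): number {
    const clip = transformVec4(viewProjection, worldPos);
    const ndcZ = clip[2] / clip[3];
    return ndcZ * 0.5 + 0.5;
}

// With the camera at the origin (identity view matrix), viewProjection is just the projection.
const viewProjection = perspective(Math.PI / 3, 16 / 9, 1, 100);
const depthAtNear = fragDepth([0, 0, -1, 1], viewProjection);
const depthAtFar = fragDepth([0, 0, -100, 1], viewProjection);
```

Writing this value for every ray-marched pixel is what lets the depth test sort ray-marched surfaces against regular meshes. Platforms whose NDC z range is already [0, 1] would skip the final remap.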

Basic ray marching node material

One important thing to understand is that we’ve unlocked the ability to do ray marching in NME, but there’s no pre-existing “RayMarchingBlock” node! You still need to provide the code that implements ray marching yourself.

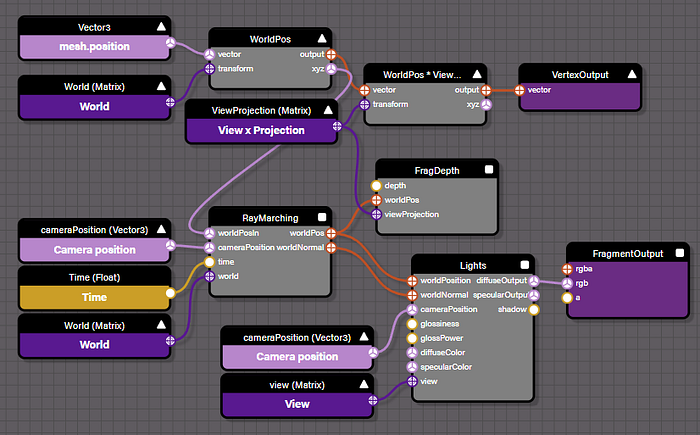

By way of illustration, here’s a node material that implements a very basic rendering of a sphere + box with ray marching (https://nme.babylonjs.com/#GD8DSL#26):

Output:

As you can see, it’s quite simple: we use the output of the block named “RayMarching” to write the depth (thanks to the FragDepth block) and to generate the lighting (thanks to the Lights block).

The RayMarching block is a custom block, for which we have provided the GLSL code:

As you can see, this is a very basic implementation of the ray marching algorithm. For the sake of clarity, I haven’t shown the sdf function (line 9), which simply calculates the distance between a point and the objects in the scene; you can export the material to a .json file from the NME and see the complete source there. The code calculates the position and normal in world coordinates and returns them as output parameters, which can then be used by other blocks in the graph editor.
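For readers wondering how a normal can be obtained from a distance function, one common approach is to take central differences of the SDF around the hit point. The sketch below shows that approach in TypeScript for a sphere + box scene. It is not the code of the RayMarching block (see the exported .json for that); the object placement and the function names are assumptions made for the illustration.

```typescript
type Vec3 = [number, number, number];

function sdSphere(p: Vec3, radius: number): number {
    return Math.sqrt(p[0] * p[0] + p[1] * p[1] + p[2] * p[2]) - radius;
}

// Signed distance to an axis-aligned box centered at the origin.
function sdBox(p: Vec3, halfSize: Vec3): number {
    const q: Vec3 = [Math.abs(p[0]) - halfSize[0], Math.abs(p[1]) - halfSize[1], Math.abs(p[2]) - halfSize[2]];
    const ox = Math.max(q[0], 0), oy = Math.max(q[1], 0), oz = Math.max(q[2], 0);
    const outside = Math.sqrt(ox * ox + oy * oy + oz * oz);
    const inside = Math.min(Math.max(q[0], q[1], q[2]), 0);
    return outside + inside;
}

// Hypothetical scene: a unit sphere at x = -1.5 and a 2x2x2 box at x = +1.5.
// The union of two shapes is the minimum of their distances.
function sceneSdf(p: Vec3): number {
    const sphere = sdSphere([p[0] + 1.5, p[1], p[2]], 1);
    const box = sdBox([p[0] - 1.5, p[1], p[2]], [1, 1, 1]);
    return Math.min(sphere, box);
}

// The gradient of the SDF points away from the surface; normalizing it gives the normal.
function estimateNormal(p: Vec3, h = 1e-4): Vec3 {
    const dx = sceneSdf([p[0] + h, p[1], p[2]]) - sceneSdf([p[0] - h, p[1], p[2]]);
    const dy = sceneSdf([p[0], p[1] + h, p[2]]) - sceneSdf([p[0], p[1] - h, p[2]]);
    const dz = sceneSdf([p[0], p[1], p[2] + h]) - sceneSdf([p[0], p[1], p[2] - h]);
    const len = Math.sqrt(dx * dx + dy * dy + dz * dz);
    return [dx / len, dy / len, dz / len];
}

const normalOnSphereTop = estimateNormal([-1.5, 1, 0]); // top of the sphere
const normalOnBoxSide = estimateNormal([2.5, 0, 0]);     // +X face of the box
```

The cost is six extra SDF evaluations per hit, which is usually acceptable because the normal is only computed once, after the marching loop has finished.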

Improving the rendering

A ray marching algorithm doesn’t usually provide UV coordinates; it calculates the final lighting itself. In our case, where we want better integration into existing scenes, we’d like to apply the lighting ourselves through the Lights block or PBRMetallicRoughness. As UVs are difficult to generate, it’s best to use an automated process to apply a texture to a mesh. To this end, we’ve created the TriPlanar block, which can project a texture onto any object, given a position (world) and a normal. We’ve also added a BiPlanar block, which is a little more performant than TriPlanar but can introduce more artifacts.
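The idea behind triplanar projection can be sketched as follows: sample the texture three times, once per world axis, and blend the results with weights derived from the normal. This is a generic TypeScript illustration, not the code generated by the TriPlanar block; the `sharpness` parameter and the procedural `sampleChecker` stand-in for a texture lookup are assumptions, and the normal is assumed to be non-zero.

```typescript
type Vec3 = [number, number, number];

// Blend weights: the more the normal faces an axis, the more that projection contributes.
// Raising to a power tightens the transition; dividing by the sum makes the weights add up to 1.
function triplanarWeights(normal: Vec3, sharpness = 4): Vec3 {
    const wx = Math.pow(Math.abs(normal[0]), sharpness);
    const wy = Math.pow(Math.abs(normal[1]), sharpness);
    const wz = Math.pow(Math.abs(normal[2]), sharpness);
    const sum = wx + wy + wz;
    return [wx / sum, wy / sum, wz / sum];
}

// Stand-in for a texture lookup: a hypothetical checkerboard returning a grey level (0 or 1).
function sampleChecker(u: number, v: number): number {
    return (Math.floor(u) + Math.floor(v)) % 2 === 0 ? 1 : 0;
}

// Project the world position onto the three axis-aligned planes and blend the samples.
function triplanarSample(position: Vec3, normal: Vec3): number {
    const [wx, wy, wz] = triplanarWeights(normal);
    const alongX = sampleChecker(position[1], position[2]); // YZ plane
    const alongY = sampleChecker(position[0], position[2]); // XZ plane
    const alongZ = sampleChecker(position[0], position[1]); // XY plane
    return alongX * wx + alongY * wy + alongZ * wz;
}

const upWeights = triplanarWeights([0, 1, 0]);
const diagonalWeights = triplanarWeights([Math.SQRT1_2, Math.SQRT1_2, 0]);
const greyOnFloor = triplanarSample([0.5, 3, 0.5], [0, 1, 0]);
```

Because only the world position and normal are needed, this fits ray-marched surfaces well: both values are already outputs of the ray marching step.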

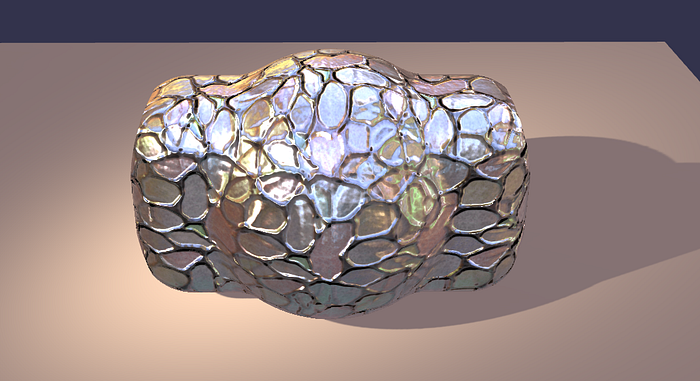

Here’s what you get when you add a TriPlanar block to the previous example (https://nme.babylonjs.com/#GD8DSL#27):

Another enhancement is to generate shadows for these ray marched objects. This is a little more complicated, as you’ll need to create an additional node material that will be used to generate the object in the light shadow map. To enable this use case, we’ve added a ShadowMap block to the NME.

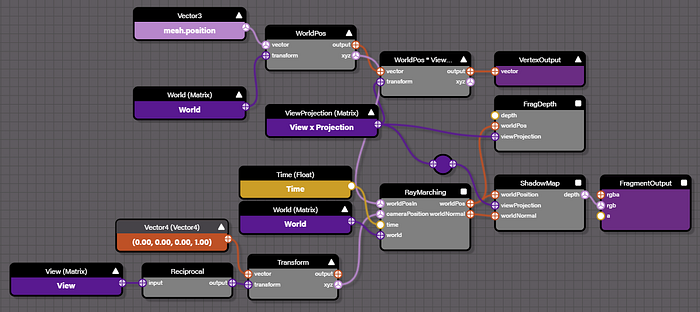

Here’s what this material looks like to support shadows in the example we’ve built so far (https://nme.babylonjs.com/#E3K99P):

It’s quite simple: we use the output of the RayMarching block as input to the ShadowMap block, and use the depth generated by this block as the final value generated by this material: depth (in view space) is what a shadow map stores.

The RayMarching.cameraPosition input requires some explanation. The “Camera position” input block gives you the camera’s position (in world space). This would seem to be the right block to connect to the RayMarching.cameraPosition input, but it’s not! In fact, when generating the shadow map, the camera position should be the light position (we’re generating the shadow map from the light’s point of view). The simplest way to calculate this position is to take the inverse of the view matrix (which is the view matrix of the light) and transform the position (0,0,0,1) (which is the position of the light in view space) by this matrix. We could also have added a “lightPosition” input to the material and filled it from the outside (this would be more efficient).
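The inverse-view-matrix trick described above can be checked numerically. The sketch below assumes a rigid view matrix (a rotation plus a translation, no scaling) stored column-major and acting on column vectors; under that assumption, transforming (0,0,0,1) by the inverse reduces to minus the transposed rotation times the translation. The function names are hypothetical and this is not how the node material itself is wired.

```typescript
type Vec3 = [number, number, number];
// Column-major 4x4 matrix acting on column vectors: element (row, col) is m[col * 4 + row].
type Mat4 = number[];

// Builds a simple rigid view matrix for a viewer at `eye`, rotated by `yaw` around Y.
// view = R * T(-eye), so its translation column is R * (-eye).
function makeView(eye: Vec3, yaw: number): Mat4 {
    const c = Math.cos(yaw);
    const s = Math.sin(yaw);
    const tx = -(c * eye[0] + s * eye[2]);
    const ty = -eye[1];
    const tz = -(-s * eye[0] + c * eye[2]);
    return [
        c, 0, -s, 0,
        0, 1, 0, 0,
        s, 0, c, 0,
        tx, ty, tz, 1,
    ];
}

// World-space position of the viewer: inverse(view) * (0, 0, 0, 1).
// For a rigid matrix this equals -transpose(R) * t, so no general inverse is needed.
function viewOriginInWorld(view: Mat4): Vec3 {
    const t: Vec3 = [view[12], view[13], view[14]];
    return [
        -(view[0] * t[0] + view[1] * t[1] + view[2] * t[2]),
        -(view[4] * t[0] + view[5] * t[1] + view[6] * t[2]),
        -(view[8] * t[0] + view[9] * t[1] + view[10] * t[2]),
    ];
}

// During the shadow pass the "view" matrix belongs to the light,
// so the recovered point is the light position.
const lightView = makeView([3, 8, -2], Math.PI / 5);
const recoveredLightPosition = viewOriginInWorld(lightView);
```

In a shader you would typically just multiply the inverted matrix by (0,0,0,1), which amounts to reading its translation column.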

Note that the light position is only used if the light is a spot or point light. If the light is a directional light, there’s special code at the beginning of the raymarch function to handle this case:

In this case, the rays start from the current world position and travel along the direction of the light (given by lightDataSM).
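The difference between the two cases can be summarized with a small sketch. This is an interpretation written for illustration, not the code of the raymarch function: the `lightData` parameter is a hypothetical stand-in holding a direction for directional lights and a position otherwise, and the assumption that point and spot light rays go from the light towards the current world position is mine.

```typescript
type Vec3 = [number, number, number];

interface Ray {
    start: Vec3;
    direction: Vec3;
}

function normalize(v: Vec3): Vec3 {
    const len = Math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2]);
    return [v[0] / len, v[1] / len, v[2] / len];
}

// lightData is hypothetical: a direction for directional lights, a world position otherwise.
function shadowRay(worldPos: Vec3, lightData: Vec3, isDirectional: boolean): Ray {
    if (isDirectional) {
        // All rays are parallel: start at the current world position, follow the light direction.
        return { start: worldPos, direction: normalize(lightData) };
    }
    // Assumed behavior for point and spot lights: rays fan out from the light position.
    const toFragment: Vec3 = [
        worldPos[0] - lightData[0],
        worldPos[1] - lightData[1],
        worldPos[2] - lightData[2],
    ];
    return { start: lightData, direction: normalize(toFragment) };
}

const directionalRay = shadowRay([1, 5, 2], [0, -2, 0], true);
const pointLightRay = shadowRay([0, 0, 0], [0, 10, 0], false);
```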

This material must be wrapped in a ShadowDepthWrapper so that it can be used when generating the shadow map.

Here’s the final playground, encompassing everything we’ve discussed so far:

https://playground.babylonjs.com/frame.html?#M3QR7E#61

To avoid ending on the dreaded “coder art”, here’s our friend the snail integrated into a Babylon playground (https://playground.babylonjs.com/#M3QR7E#78):

Conclusion

Ray marching is a great technique for generating impressive images, and you can now use it in the Node Material Editor!

Don’t hesitate to share your experiences on our forums: we love seeing what our users create with the tools we put at their disposal!

Popov — Babylon.js Team